You'll notice pretty quickly that this one is a little different. Instead of writing about infrastructure or token standards, I want to talk about how I actually work in hopes that it might help someone reading this.

I lead business development and operations at Hgraph. We build blockchain infrastructure and solutions for startups and enterprises: cross-chain interoperability, backend ops, data indexing, APIs, bare-metal nodes, and custom engineering. Our engineers are excellent, and I'm not one of them. The tools available now mean that doesn't limit what I can contribute.

I use Claude Cowork and Claude Code every day. They're not a novelty; they're a core part of how I do my job. Here's what that actually looks like.

Building Presentations

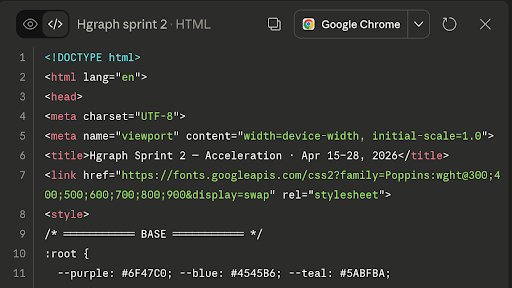

I build HTML presentations for internal and external use instead of PowerPoint decks: fully designed, interactive pages I walk through in a browser. That covers strategy decks, architecture overviews, client materials, and more.

To keep everything on brand, I built a skill file that gives Claude access to our brand assets, colors, typography, and layout rules. Every presentation comes out looking like Hgraph without me having to think much about it. I describe what I need, work through the structure and content, and produce something I can put in front of anyone. That used to require pulling someone off product or client work; now it doesn't.
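For readers who want something concrete, here is a minimal Python sketch of the underlying idea: keep the brand rules in one place and render every slide from them. This is not the actual skill file. The `BRAND` values, the `render_slide` helper, and the markup are all hypothetical placeholders chosen for illustration.

```python
from html import escape

# Hypothetical brand tokens: placeholder values, not Hgraph's real palette or fonts.
BRAND = {
    "primary": "#123456",
    "background": "#ffffff",
    "font_family": "Inter, sans-serif",
    "max_width": "960px",
}


def render_slide(title: str, bullets: list[str], brand: dict[str, str] = BRAND) -> str:
    """Return one slide as an HTML <section> styled only from the brand tokens."""
    # Escape user-supplied text so characters like & and < stay valid HTML.
    items = "\n".join(f"    <li>{escape(b)}</li>" for b in bullets)
    style = (
        f"font-family: {brand['font_family']}; "
        f"background: {brand['background']}; "
        f"max-width: {brand['max_width']};"
    )
    return (
        f'<section class="slide" style="{style}">\n'
        f'  <h2 style="color: {brand["primary"]};">{escape(title)}</h2>\n'
        f"  <ul>\n{items}\n  </ul>\n"
        f"</section>"
    )


if __name__ == "__main__":
    print(render_slide("Q3 & Q4 roadmap", ["Indexing", "APIs"]))
```

Because every slide reads from the same tokens, changing a color or font in one place changes it everywhere, which is roughly what makes the output consistent without extra thought.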

Connecting to Real Data

The presentations are just one piece of the puzzle. What makes this workflow powerful is connecting AI tools to live data sources.

I have Claude connected to our Linear workspace through MCP (Model Context Protocol). When I'm building an internal deck or a client update, I can pull in real-time development progress (what's shipped, what's in progress, and what's coming next) without asking anyone to compile a status update.
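To illustrate the kind of roll-up this replaces, here is a small Python sketch that groups issue records into those three buckets. It does not call Linear or MCP. The sample records, field names, state labels, and the `summarize` helper are assumptions made up for the example, not Linear's actual schema.

```python
from collections import defaultdict

# Hypothetical records shaped like what an issue tracker might return.
# Titles, field names, and states are invented for illustration.
ISSUES = [
    {"title": "Indexer backfill", "state": "Done"},
    {"title": "Bridge relayer alerts", "state": "In Progress"},
    {"title": "Public API rate limits", "state": "Todo"},
    {"title": "Node monitoring dashboard", "state": "Done"},
]

# Map tracker states onto the three headings used in a status update.
SECTION_FOR_STATE = {
    "Done": "Shipped",
    "In Progress": "In progress",
    "Todo": "Coming next",
}
SECTION_ORDER = ["Shipped", "In progress", "Coming next"]


def summarize(issues):
    """Group issue titles by section and return a plain-text status summary."""
    grouped = defaultdict(list)
    for issue in issues:
        section = SECTION_FOR_STATE.get(issue["state"])
        if section is not None:  # ignore states outside the three buckets
            grouped[section].append(issue["title"])

    lines = []
    for section in SECTION_ORDER:
        lines.append(f"{section} ({len(grouped[section])})")
        lines.extend(f"  - {title}" for title in grouped[section])
    return "\n".join(lines)


if __name__ == "__main__":
    print(summarize(ISSUES))
```

In practice the AI tool does this grouping conversationally; the sketch only shows that the status update is a simple transformation of data the tracker already holds.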

I also use the Hgraph MCP server to pull onchain data directly into my workflow. When I'm writing a blog post or building a client-facing presentation, I can query token data, transaction history, or network metrics and work them into the content. The data in our posts isn't hypothetical; it's pulled from the same APIs our clients use.

Here's a good example: "McLaren's MCL/COLLECT Drop: What the Onchain Data Shows"

Content and Research

Every blog post, social post, and piece of research we've published recently has gone through this workflow. I write the way I talk, and the AI helps me structure the draft, fact-check it, and get it ready to publish. Each article is written by me, in my voice; the AI gets it published faster.

Staying Close to the Technical Work

I'm on every call and in every product discussion. I'm not writing code, but I'm learning how things work under the hood every week. AI tools help me bridge the gap: I can ask questions, dig into technical concepts, and understand what our engineers are building at a level that would have taken me much longer to reach on my own.

Everyone Wears Every Hat

There's no clean line between roles here. On any given day, I might go from a client call scoping a cross-chain bridge, to building a presentation, to writing and reviewing a blog post, to a product conversation about what we're indexing next. That's not just me; it's almost everyone on the team. Nobody is siloed. When the whole team understands both the client's problem and the product, things move faster and the output is stronger.

Why It Matters

The result of all this is better outcomes for clients. They work with a team that understands the full picture, not just one piece of it. They get materials that communicate clearly, responses that are accurate and fast, and people who are close to every part of the business.

If you're non-technical and working in a deeply technical space, you're not at a disadvantage. You just have to stay close to the work, continue learning, and use the tools available to you.